It is possible to map IO devices to cacheable memory on at least some processors, but the accesses have to be very carefully controlled to keep within the capabilities of the hardware - some of the transactions to cacheable memory can map to IO transactions and some cannot. The notes below provide an introduction to some of the issues…. So even if the latency to the IO device were the same as the latency to memory, using cache-line accesses could easily be (for example) 64 times as fast as using uncached accesses - 8 concurrent transfers of 64 Bytes using cache-line accesses versus one serialized transfer of 8 Bytes.īut is it possible to get modern processors to use their cache-line access mechanisms to read data from MMIO addresses? The answer is a resounding, “ yes, but….“.

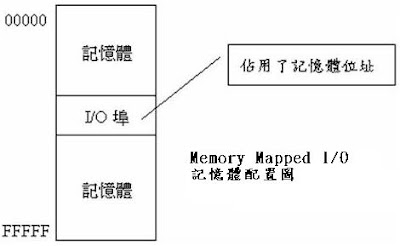

When executing loads to uncached address ranges (such as MMIO ranges), each read fetches only the specific bits requested (1, 2, 4, or 8 Bytes), and all reads to uncached address ranges are completely serialized with respect to each other and with respect to any other memory references. Such reads transfer data in cache-line-sized blocks (64 Bytes on x86 architectures) and can support multiple concurrent read transactions for high throughput. Processors only support high-performance reads when executing loads to cached address ranges. As discussed below, it is generally possible to set up an MMIO mapping that allows high-performance writes to IO space, but setting up mappings that allow high-performance reads from IO space is much more problematic. The fundamental capability exists in all modern processors through the feature called “Memory-Mapped IO” (MMIO), but for historical reasons this provides the desired functionality without the desired performance. This leads to ugly, high-latency implementations in which the processor has to program the IO unit to perform the required DMA transfers and then interrupt the processor when the transfers are complete.įor tightly-coupled acceleration, it would be nice to have the option of having the processor directly read and write to memory locations on the IO device. These asymmetries are often surprising - the tremendously complex processor is actually less capable of generating precisely controlled high-performance IO transactions than the simpler IO device.

When attempting to build heterogeneous computers with “accelerators” or “coprocessors” on PCIe interfaces, one quickly runs into asymmetries between the data transfer capabilities of processors and IO devices.
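The 64× figure for cache-line versus uncached reads follows from a simple Little's Law throughput model: sustained bandwidth equals the number of bytes in flight divided by the round-trip latency. The sketch below assumes, as the text does, identical latency for both access types. The 100 ns latency is an arbitrary placeholder (the ratio does not depend on it), and the helper name `read_bandwidth` is hypothetical.

```python
def read_bandwidth(bytes_per_transfer: int, concurrent_transfers: int, latency_ns: float) -> float:
    """Little's Law: bandwidth (bytes/ns, i.e. GB/s) = bytes in flight / latency."""
    bytes_in_flight = bytes_per_transfer * concurrent_transfers
    return bytes_in_flight / latency_ns


LATENCY_NS = 100.0  # placeholder value; assumed identical for both access types

# Cacheable loads: 64-Byte lines with 8 transfers in flight (the example above).
cached = read_bandwidth(64, 8, LATENCY_NS)
# Uncached (MMIO) loads: at most 8 Bytes per load, fully serialized (1 in flight).
uncached = read_bandwidth(8, 1, LATENCY_NS)

print(f"cached:   {cached:.2f} GB/s")    # cached:   5.12 GB/s
print(f"uncached: {uncached:.2f} GB/s")  # uncached: 0.08 GB/s
print(f"speedup:  {cached / uncached:.0f}x")  # speedup:  64x
```

Increasing the line size or the number of concurrent transfers in the cached case scales bandwidth proportionally, while the uncached case stays pinned at one 8-Byte load per round trip no matter how many loads the program issues.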
